# ME

let's focus on biology for a moment. neurons and synapses. inputs and outputs and everything in between. explain how it works, how are inputs determined and outputs resolved? genetic blueprints? flipping into a special kind of cell?

# GPT

Alright, let’s zoom all the way out and do this **biologically clean**: mechanisms first with no metaphors, then map intuition at the end.

---

## What a neuron _is_ in biology (mechanistically)

A neuron is **not** an input/output device in the computer sense.

It is a **biophysical decision-making cell** whose behavior emerges from:

- ion channels embedded in its membrane

- a dendritic tree that integrates signals spatially and temporally

- a spike-generation mechanism (the axon initial segment, just beyond the axon hillock)

- long-term molecular changes that alter future behavior

There is **no central controller** deciding “this neuron is an input” or “this neuron is an output.”

That role emerges from **where the neuron is, what genes it expresses, and who it connects to**.

---

## 1. Genetic blueprints: what genes actually specify

Genes do **not** encode:

- specific inputs

- specific outputs

- specific thoughts

- a complete, synapse-by-synapse wiring diagram (at least in large brains)

Genes encode **biases and constraints**, not solutions.

They specify things like:

- which ion channels the neuron expresses

- how excitable it is

- how fast it adapts

- what kinds of molecules it responds to

- the shape and extent of its axonal and dendritic arbors

- what chemical “tags” it presents for connection compatibility

Think of genes as defining a **cell type manifold**, not a role.

Example:

- “Express sodium channels A, potassium channels B”

- “Grow long axon, thin dendrites”

- “Prefer glutamatergic synapses”

- “Respond strongly to neuromodulator X”

That’s it.

No gene says: _“You are motor neuron #27.”_
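To make “biases and constraints, not solutions” concrete for a simulation, here is a minimal Python sketch. It assumes a hypothetical `CellTypeGenome` record (all field names and numeric ranges are illustrative, not measured values) that specifies ranges and tendencies for one cell type; `express` samples a concrete neuron from it. Note what is absent: no field names a role or a partner.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class CellTypeGenome:
    """Hypothetical 'genome' for one cell type: ranges and tendencies, no wiring."""
    threshold_range: tuple[float, float]    # mV; how excitable the cell is
    adaptation_tau_ms: tuple[float, float]  # how fast it adapts
    transmitter: str                        # e.g. "glutamate" or "GABA"
    axon_length_bias: float                 # 0..1 preference for long axons
    surface_tags: frozenset[str]            # compatibility tags for partner matching


@dataclass
class Neuron:
    threshold: float
    adaptation_tau_ms: float
    transmitter: str
    axon_length_bias: float
    surface_tags: frozenset[str]


def express(genome: CellTypeGenome, rng: random.Random) -> Neuron:
    """Sample one concrete cell from the genome's ranges. No role is assigned."""
    return Neuron(
        threshold=rng.uniform(*genome.threshold_range),
        adaptation_tau_ms=rng.uniform(*genome.adaptation_tau_ms),
        transmitter=genome.transmitter,
        axon_length_bias=genome.axon_length_bias,
        surface_tags=genome.surface_tags,
    )


def compatible(a: Neuron, b: Neuron) -> bool:
    """Shared tags only say which partners *may* connect; activity decides the rest."""
    return bool(a.surface_tags & b.surface_tags)


projection_type = CellTypeGenome(
    threshold_range=(-55.0, -50.0),
    adaptation_tau_ms=(50.0, 150.0),
    transmitter="glutamate",
    axon_length_bias=0.9,
    surface_tags=frozenset({"tagA"}),
)
rng = random.Random(0)
cells = [express(projection_type, rng) for _ in range(3)]
```

Three cells expressed from the same genome share tendencies but differ in detail, and nothing in the record says what any of them will end up doing.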

---

## 2. Neuron types (why specialization exists)

There **are** neuron classes, but they’re coarse-grained:

- excitatory pyramidal neurons

- inhibitory interneurons (many subtypes)

- sensory receptor neurons

- motor neurons

These classes are set by genetic programs interacting with developmental signals, but they are still not “roles” in a computational sense.

A motor neuron is “motor” because:

- it sends its axon out of the CNS

- it connects to muscle fibers

- it releases acetylcholine (in vertebrates; insect motor neurons use glutamate)

- it has a large soma and fast conduction

Not because it was assigned “output = move arm.”

---

## 3. Inputs: how a neuron knows what to listen to

### Dendrites are the key

A neuron receives inputs on **dendrites**, which are:

- spatially structured

- electrically lossy

- biochemically active

Each synapse:

- sits at a specific location on the dendrite

- has its own strength

- has its own timing

- can be excitatory or inhibitory

Inputs are not “numbers.”

They are **ionic currents over time**, shaped by:

- synapse type

- distance to soma

- dendritic nonlinearities

- recent activity

Two weak inputs arriving 1 ms apart on the same dendritic branch can have more effect than two strong inputs that are far apart in time or space.
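The timing claim can be illustrated with a toy model, assuming each input produces a postsynaptic potential (PSP) that jumps instantly and then decays exponentially. That is a simplification: real PSPs have a rise time, and this covers only the temporal half of the claim (spatial attenuation along the dendrite is ignored). The amplitudes and the 10 ms time constant are illustrative.

```python
import math


def summed_psp(input_times_ms, amplitudes_mv, t_ms, tau_ms=10.0):
    """Depolarization at time t from inputs that each decay as exp(-elapsed / tau)."""
    total = 0.0
    for t_in, amp in zip(input_times_ms, amplitudes_mv):
        if t_in <= t_ms:  # inputs in the future contribute nothing yet
            total += amp * math.exp(-(t_ms - t_in) / tau_ms)
    return total


# Two weak (3 mV) inputs 1 ms apart vs. two stronger (5 mV) inputs 50 ms apart,
# each pair measured at the moment its second input arrives.
near = summed_psp([0.0, 1.0], [3.0, 3.0], t_ms=1.0)
far = summed_psp([0.0, 50.0], [5.0, 5.0], t_ms=50.0)
```

Here `near` comes out around 5.7 mV and `far` around 5.0 mV: the weaker but coincident pair produces the larger peak, because the first of the widely spaced inputs has almost entirely decayed by the time the second arrives.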

---

## 4. Integration: how “computation” happens

Neurons do **not** sum inputs linearly.

They perform:

- temporal integration (over ms–tens of ms)

- spatial integration (across dendritic branches)

- nonlinear amplification (NMDA spikes, dendritic spikes)

- inhibition-driven gating

The neuron is constantly asking:

> “Is there a _coherent pattern_ of activity right now that matters?”

That coherence is defined by:

- timing

- location

- past history

There is no global clock.
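One common abstraction of this (often described as a two-layer neuron model) treats each dendritic branch as its own sigmoidal unit and the soma as summing the branch outputs. The sketch below assumes that abstraction; the gain, branch threshold, and input units are illustrative, and inhibition is modeled crudely as negative input on the branch where it lands.

```python
import math


def branch_output(inputs, gain=1.0, branch_threshold=4.0):
    """A branch responds supralinearly once its local input nears threshold
    (a crude stand-in for an NMDA or dendritic spike)."""
    local = sum(inputs)  # excitatory inputs positive, inhibitory negative
    return 1.0 / (1.0 + math.exp(-gain * (local - branch_threshold)))


def soma_drive(branches):
    """The soma sums branch outputs that are already nonlinear."""
    return sum(branch_output(b) for b in branches)


# Same total excitation (8 units), arranged differently across 4 branches.
clustered = soma_drive([[2, 2, 2, 2], [], [], []])
scattered = soma_drive([[2], [2], [2], [2]])
gated = soma_drive([[2, 2, 2, 2, -6], [], [], []])  # inhibition on the active branch
```

With the same total excitation, `clustered` drives the soma harder than `scattered` (about 1.04 vs. 0.48 in these arbitrary units), and a single inhibitory input on the active branch (`gated`) drops it to about 0.17. Location and gating matter, not just the sum.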

---

## 5. Output: how a neuron decides to act

### Action potentials (spikes)

If the integrated membrane potential at the **axon initial segment** crosses threshold:

- voltage-gated sodium channels open

- the neuron fires a spike

- that spike travels down the axon

That’s it.

The neuron does not know:

- who it is signaling

- what the spike “means”

- whether the spike is correct

It only knows: _threshold crossed_.
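The threshold-crossing step is often abstracted as a leaky integrate-and-fire (LIF) unit. This sketch is a large simplification of the real sodium-channel dynamics (fixed threshold, instantaneous reset, no spike shape), integrated with a simple Euler step; the parameter values are illustrative rather than fitted to any cell.

```python
def simulate_lif(input_current, dt_ms=1.0, tau_ms=20.0, v_rest=-70.0,
                 v_threshold=-54.0, v_reset=-70.0, resistance=1.0):
    """Euler-integrate a leaky integrate-and-fire neuron.

    input_current: per-step input (arbitrary units; resistance * current is in mV).
    Returns the step indices at which the cell spiked.
    """
    v = v_rest
    spikes = []
    for step, current in enumerate(input_current):
        # The leak pulls v back toward rest; the input pushes it away.
        v += dt_ms / tau_ms * (v_rest - v + resistance * current)
        if v >= v_threshold:
            spikes.append(step)  # the only thing the cell "knows": threshold crossed
            v = v_reset
    return spikes


weak = simulate_lif([10.0] * 200)    # settles at -60 mV, below threshold
strong = simulate_lif([20.0] * 200)  # heads toward -50 mV, above threshold
```

With these numbers the weak input settles at -60 mV and never fires, while the strong input fires periodically (first spike at step 31). The function returns only spike times, which is all the cell itself "knows".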

---

## 6. Synapses: where learning actually lives

Synapses are **not wires**. They are **adaptive molecular machines**.

Each synapse can change:

- how much neurotransmitter it releases

- how sensitive the post-synaptic receptors are

- whether it even exists

Learning happens primarily by:

- strengthening synapses that repeatedly contribute to spikes

- weakening or pruning synapses that don’t

This is local and selfish:

- a synapse only “knows” presynaptic activity, postsynaptic activity, and its chemical context (e.g., neuromodulators)

There is no global loss function computed and routed back to each synapse.
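A standard way to write a rule that uses only locally available information is pair-based spike-timing-dependent plasticity (STDP): a synapse strengthens when the presynaptic spike precedes the postsynaptic spike and weakens when it follows. This Python sketch adds a scalar `modulator` as a crude stand-in for chemical context; that term, the amplitudes, and the 20 ms window are illustrative assumptions.

```python
import math


def stdp_update(weight, pre_spikes_ms, post_spikes_ms, a_plus=0.01, a_minus=0.012,
                tau_ms=20.0, modulator=1.0, w_min=0.0, w_max=1.0):
    """Pair-based STDP using only this synapse's own pre and post spike times.

    modulator scales the whole update (a stand-in for neuromodulatory context);
    nothing else from the network is consulted.
    """
    dw = 0.0
    for t_pre in pre_spikes_ms:
        for t_post in post_spikes_ms:
            dt = t_post - t_pre
            if dt > 0:    # pre before post: pre may have helped cause the spike
                dw += a_plus * math.exp(-dt / tau_ms)
            elif dt < 0:  # pre after post: pre could not have contributed
                dw -= a_minus * math.exp(dt / tau_ms)
    return min(w_max, max(w_min, weight + modulator * dw))


causal = stdp_update(0.5, pre_spikes_ms=[10.0], post_spikes_ms=[15.0])
acausal = stdp_update(0.5, pre_spikes_ms=[20.0], post_spikes_ms=[15.0])
gated_off = stdp_update(0.5, [10.0], [15.0], modulator=0.0)
```

The pre-before-post pairing raises the weight above 0.5, the reversed order lowers it, and `modulator=0.0` blocks learning entirely, which is one way chemical context can gate a purely local rule. A synapse that sits at `w_min` for long enough is a natural candidate for pruning.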

---

## 7. How “inputs” and “outputs” _emerge_

This is the crucial part.

A neuron becomes an **input neuron** if:

- it receives signals from the outside world (retina, cochlea, skin)

- its activity is driven more by external energy than by other neurons

A neuron becomes an **output neuron** if:

- its axon connects to muscles or glands

- or, more loosely, to premotor circuits that directly drive those cells

Everything else is **intermediate**, even if highly specialized.

No neuron internally represents:

> “I am an input.”

Roles emerge from **connectivity + environment**.
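The idea that roles come from connectivity can be shown by deriving a label from a wiring graph instead of storing one. This is a hypothetical Python illustration: the node names, the `SENSOR`/`EFFECTOR` sentinels, and the three-way classification are assumptions made for the example.

```python
SENSOR = "world"     # stands in for light, sound, or pressure entering the system
EFFECTOR = "muscle"  # stands in for muscles or glands


def derived_role(node, edges):
    """Compute a role from who a node listens to and who it drives.

    edges: set of (source, target) pairs. No role is stored on the node.
    """
    sources = {s for s, t in edges if t == node}
    targets = {t for s, t in edges if s == node}
    if SENSOR in sources:
        return "input"
    if EFFECTOR in targets:
        return "output"
    return "intermediate"


wiring = {(SENSOR, "a"), ("a", "b"), ("b", "c"), ("c", EFFECTOR)}
roles = {n: derived_role(n, wiring) for n in ("a", "b", "c")}

# Rewire: the same cell "b" now also drives the muscle directly.
rewired = wiring | {("b", EFFECTOR)}
```

Cell `b` is intermediate in the first wiring and becomes an output after rewiring, without any change to `b` itself.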

---

## 8. Development: how the system wires itself

During development:

- neurons are born

- they migrate

- they grow axons and dendrites

- they form _too many_ synapses

Then:

- activity-dependent pruning removes a large fraction of them

- frequently used paths stabilize

- rarely used paths disappear

This is why:

- the final structure is refined by function

- not dictated by genes alone

Genes set the **initial conditions**.

Experience selects the **final circuit**.
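The "overgrow, then prune by use" sequence can be sketched in Python as follows. Assumptions not in the text above: initial synapses are created at random, "use" is a per-synapse count of co-active events, and anything used less than half the average is removed; the connection probability, activity pattern, and cutoff are all illustrative.

```python
import random


def overgrow(n_pre, n_post, p_connect, rng):
    """Genetic contribution: permissive, excessive initial wiring (random here)."""
    return {(i, j) for i in range(n_pre) for j in range(n_post)
            if rng.random() < p_connect}


def prune_by_use(synapses, use_counts, keep_fraction_of_mean=0.5):
    """Experience's contribution: keep synapses used at least a fraction of the average."""
    mean_use = sum(use_counts.get(s, 0) for s in synapses) / max(1, len(synapses))
    cutoff = keep_fraction_of_mean * mean_use
    return {s for s in synapses if use_counts.get(s, 0) >= cutoff}


rng = random.Random(1)
synapses = overgrow(n_pre=20, n_post=20, p_connect=0.8, rng=rng)

# Hypothetical experience: only pre/post pairs with matching parity are ever co-active.
use_counts = {}
for _ in range(2000):
    i, j = rng.randrange(20), rng.randrange(20)
    if i % 2 == j % 2 and (i, j) in synapses:
        use_counts[(i, j)] = use_counts.get((i, j), 0) + 1

survivors = prune_by_use(synapses, use_counts)
```

Synapses between mismatched cells are never used and are all removed, while frequently used ones survive: the final circuit reflects the experience, not the initial overgrowth.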

---

## 9. Plasticity phases (critical insight for your system)

Biology uses **phases**, not uniform rules:

1. **Early phase**

   - high plasticity

   - low thresholds

   - lots of growth

   - permissive wiring

2. **Middle phase**

   - specialization

   - competition

   - pruning

   - myelination

3. **Late phase**

   - stability

   - slow adaptation

   - protection of learned structure

You just re-discovered this experimentally.

Your “brittle dispersal” failure looks like what happens when:

- early permissive conditions are removed too soon

- or never existed
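A phase-dependent rule set can be as simple as a schedule that maps developmental time to parameters. Everything here is a hypothetical Python illustration: the phase boundaries, the parameter names (`learning_rate`, `growth_rate`, `prune_threshold`, `protect_strong`), and their values are assumptions, chosen so that plasticity and growth fall across phases, pruning peaks in the middle phase, and protection switches on late.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhaseRules:
    name: str
    learning_rate: float    # how fast synaptic weights move
    growth_rate: float      # new synapses proposed per neuron per step
    prune_threshold: float  # weights below this are removed
    protect_strong: bool    # freeze weights that have consolidated


EARLY = PhaseRules("early", learning_rate=0.10, growth_rate=2.0,
                   prune_threshold=0.00, protect_strong=False)
MIDDLE = PhaseRules("middle", learning_rate=0.05, growth_rate=0.5,
                    prune_threshold=0.10, protect_strong=False)
LATE = PhaseRules("late", learning_rate=0.005, growth_rate=0.05,
                  prune_threshold=0.05, protect_strong=True)


def rules_for(step, early_until=1_000, middle_until=5_000):
    """Pick the rule set by developmental time; richer triggers are possible."""
    if step < early_until:
        return EARLY
    if step < middle_until:
        return MIDDLE
    return LATE
```

Skipping the permissive phase (for example, calling `rules_for(step, early_until=0)`) hands a young network the middle phase's pruning straight away, which is one plausible way to get the failure described above.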

---

## 10. The big truth (this ties directly to your project)

There is **no explicit notion of “input” or “output” in the brain**.

There are:

- cells with biases

- local rules

- physical constraints

- slow-changing tissue

- fast-changing synapses

Behavior emerges because:

- spikes propagate

- correlations stabilize

- structure locks in

---

## Mapping this back to what you’re building (briefly)

What you’re doing right:

- event-based signaling (spikes)

- coincidence detection

- sub-threshold learning

- migration and tissue

- synaptogenesis

What biology adds that you’re just now touching:

- **developmental phases**

- **critical periods**

- **different time constants for structure vs learning**

- **a medium that resists change and remembers its history**

Nothing you’re seeing is un-biological.

In fact, your system is failing in the _same ways biology fails_ when those layers are mis-timed.

If you want next:

- we can map each biological mechanism 1:1 to a minimal computational analog

- or talk specifically about _how a neuron “chooses” what to represent_

- or drill into one cell type (e.g., pyramidal neuron) in painful detail

You asked the right question.

# ME

what about butterflies? they turn into a genetic soup and then there are discs that become special parts of the new body. I want that. "become this" not "you are this"

# GPT

Yes. **That’s the right instinct**, and it’s closer to real biology than most “neural role” models ever get.

But let me clean up one important myth first, because the truth is even _better_ for what you want.

Butterflies do **not** turn into random genetic soup.

They enter a **controlled dissolution phase** where _most_ larval tissue is broken down, **but a set of pre-specified developmental programs survive intact** and then _expand_ to rebuild the organism.

Those programs are the key.

---

## What actually happens in metamorphosis

### 1. The larva is temporary infrastructure

The caterpillar is optimized for:

- eating

- growing mass

- storing energy

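Its muscles, gut, and most other larval tissues are **scaffolding**, not destiny. The nervous system is a partial exception: many larval neurons survive metamorphosis and are remodeled (pruned back and regrown) into adult circuits, alongside newly added neurons.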

---

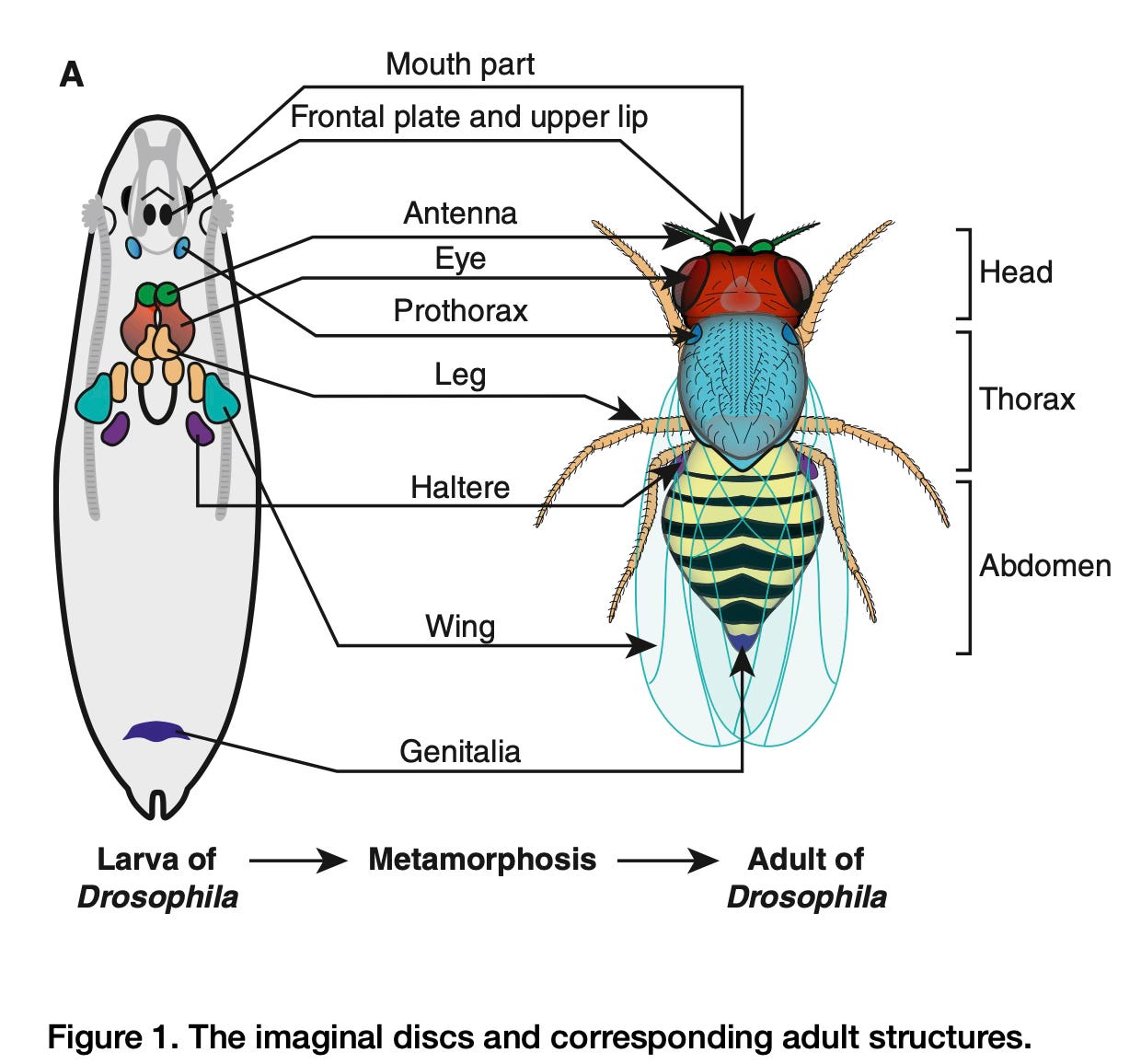

### 2. Imaginal discs: the “become this” programs

Inside the caterpillar are clusters of cells called **imaginal discs**.

Each disc is:

- genetically specified early

- growing during larval life, but not yet differentiating

- resistant to dissolution

- fate-committed, but not yet executed

There are discs for:

- wings

- legs

- eyes

- antennae

- genitalia

- parts of the nervous system

Critically:

> **An imaginal disc is not a body part.**
>
> **It is a program that knows how to become one.**

No disc says “I am a wing.”

It says “if given the right conditions, I will _execute wing formation_.”

---

### 3. The “soup” phase is regulated, not chaos

During pupation:

- most larval cells undergo apoptosis (programmed cell death)

- tissues are digested into nutrients

- **imaginal discs are protected**

- hormonal signals switch the developmental mode (a pulse of ecdysone while juvenile hormone levels are low)

The environment changes.

The rules change.

The same genome runs a _different program_.

This is the part you want.

---

### 4. Differentiation is conditional, not assigned

Imaginal discs respond to:

- positional signals

- morphogen gradients

- mechanical constraints

- interaction with neighboring discs

They don’t have a central coordinator beyond the global hormonal switch.

They negotiate their final form **locally**.
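A classic abstraction for how cells read positional signals locally is Wolpert's "French flag" model: a morphogen decays with distance from a source, and each cell picks a fate by comparing the concentration it sees against fixed thresholds. This Python sketch assumes exponential decay and two illustrative thresholds; it is a textbook abstraction, not a model of any particular imaginal disc.

```python
import math


def morphogen(x: float, source_level: float = 1.0, decay_length: float = 0.2) -> float:
    """Concentration at position x (0 = source) for an exponentially decaying gradient."""
    return source_level * math.exp(-x / decay_length)


def fate(concentration: float, high: float = 0.5, low: float = 0.1) -> str:
    """Each cell decides from its own local reading; no coordinator assigns fates."""
    if concentration >= high:
        return "A"
    if concentration >= low:
        return "B"
    return "C"


positions = [i / 10 for i in range(11)]  # 11 cells along a 0..1 axis
pattern = [fate(morphogen(x)) for x in positions]
stronger = [fate(morphogen(x, source_level=2.0)) for x in positions]
```

Raising `source_level` moves every boundary away from the source (three "A" cells instead of two), so changing one signal reshapes the whole pattern without editing any individual cell.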

---

## The deep pattern you’re reaching for

What butterflies prove is this:

> **Cells don’t carry roles.**
>
> **They carry latent programs that activate under specific global conditions.**

That’s the “become this” you’re talking about.

---

## How this maps _directly_ to neurons and brains

Brains do this too, just more subtly.

### Neural progenitors ≈ imaginal discs

Early in development:

- progenitor cells don’t yet know whether they’ll become sensory neurons, motor neurons, or interneurons

- they know _biases_ (excitatory vs inhibitory, migration tendencies)

Context decides.

### Critical periods ≈ pupation windows

During certain phases:

- plasticity explodes

- structure reorganizes

- roles crystallize

Miss the window, and the role never fully forms.
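The "miss the window" point can be sketched by gating how much experience is allowed to change a cell's specialization with a time-dependent plasticity window. The Gaussian-shaped window, its center and width, the `drive` rate, and the `specialization` variable are illustrative assumptions in this Python sketch.

```python
import math


def plasticity_gate(step: int, center: float = 300.0, width: float = 100.0) -> float:
    """Openness of the critical period: near 1 inside the window, near 0 outside."""
    return math.exp(-((step - center) / width) ** 2)


def develop(experience_start: int, steps: int = 1_000, drive: float = 0.01) -> float:
    """Accumulate specialization from experience that begins at experience_start."""
    specialization = 0.0
    for step in range(steps):
        if step >= experience_start:
            # The same experience counts for more or less depending on the window.
            specialization += drive * plasticity_gate(step) * (1.0 - specialization)
    return specialization


on_time = develop(experience_start=100)
too_late = develop(experience_start=700)
```

The same experience, started at step 100, drives `specialization` most of the way toward 1; started at step 700, after the window has closed, it barely moves it.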

---

## What you want in your system (translated precisely)

You do **not** want:

- “this neuron is motor”

- “this region is auditory”

You want:

### Latent identity programs

Each neuron (or cluster) carries:

- a _potential profile_

- a set of transformation rules

- thresholds for activation

Example:

> “If exposed to sustained downstream load + low sensory drive + high coincidence reliability → transition toward motor-like behavior.”

That’s an imaginal disc.
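The example rule above can be written as a dormant program with a condition and a slowly accumulating commitment, so a neuron drifts toward motor-like behavior only while the conditions hold. In this Python sketch the metric names (`downstream_load`, `sensory_drive`, `coincidence_reliability`), the thresholds, and the rates are hypothetical placeholders for whatever your simulation actually measures.

```python
from dataclasses import dataclass
from typing import Callable

Metrics = dict[str, float]


def motor_like_condition(m: Metrics) -> bool:
    """Hypothetical trigger from the example rule: all three conditions must hold."""
    return (m["downstream_load"] > 0.7
            and m["sensory_drive"] < 0.2
            and m["coincidence_reliability"] > 0.8)


@dataclass
class LatentProgram:
    name: str
    condition: Callable[[Metrics], bool]
    commitment: float = 0.0  # 0 = dormant, 1 = fully executed

    def step(self, metrics: Metrics, rate: float = 0.05, decay: float = 0.01) -> float:
        # Move toward the program while conditions hold; relax slowly when they don't.
        if self.condition(metrics):
            self.commitment += rate * (1.0 - self.commitment)
        else:
            self.commitment -= decay * self.commitment
        return self.commitment


motor_like = LatentProgram("motor-like", motor_like_condition)
favorable = {"downstream_load": 0.9, "sensory_drive": 0.1, "coincidence_reliability": 0.9}
for _ in range(60):
    motor_like.step(favorable)
```

After 60 favorable steps the program is mostly committed (about 0.95), while a neuron with strong sensory drive never leaves 0. The slow decay is a design choice that allows partial rollback if conditions stop holding.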

---

### A mode switch (your pupation trigger)

Butterflies don’t differentiate until:

- enough mass is accumulated

- hormones flip

- environment stabilizes

You need the same thing.

A global or semi-global signal like:

- “network coherence reached”

- “energy budget exceeded”

- “developmental epoch N reached”

- “plasticity pressure too high”

When that flips:

- learning rules change

- migration rules change

- synaptogenesis rules change

- identity programs unlock
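A mode switch like this can be sketched as a latch on one of those global signals, with hysteresis so that noise near the threshold does not flip the system back and forth. In this Python sketch, the choice of "network coherence" as the signal, the on/off thresholds, and the two rule dictionaries are hypothetical; any of the other listed signals could drive the same latch.

```python
class PupationTrigger:
    """Latch with hysteresis: switches on at `on`, switches back off only below `off`."""

    def __init__(self, on: float = 0.8, off: float = 0.5):
        if not off < on:
            raise ValueError("hysteresis needs off < on")
        self.on, self.off = on, off
        self.active = False

    def update(self, coherence: float) -> bool:
        if not self.active and coherence >= self.on:
            self.active = True
        elif self.active and coherence < self.off:
            self.active = False
        return self.active


# Hypothetical rule sets; each value would select a different update function.
LARVAL_RULES = {"learning": "fast_hebbian", "migration": "free",
                "synaptogenesis": "permissive", "identity_programs": "locked"}
ADULT_RULES = {"learning": "slow_consolidating", "migration": "restricted",
               "synaptogenesis": "selective", "identity_programs": "unlocked"}

trigger = PupationTrigger()
history = []
for coherence in [0.3, 0.6, 0.85, 0.7, 0.6, 0.4]:
    rules = ADULT_RULES if trigger.update(coherence) else LARVAL_RULES
    history.append(rules["identity_programs"])
```

Coherence has to reach 0.8 to unlock identity programs, but once unlocked it takes a drop below 0.5 to lock them again. Setting `off=float("-inf")` would make the switch strictly one-way, closer to metamorphosis.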

---

### Dissolution without destruction

Important: imaginal discs survive because they are _protected_.

In your system:

- most weak synapses can dissolve

- many transient structures can collapse

- **latent identity programs must be preserved**

That means:

- some state must be immutable or slow-changing

- not everything is plastic
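Separating protected state from plastic state can be made explicit in the data structure, so that a dissolution step cannot touch it by construction. In this Python sketch the field names, the weight cutoff, and the use of a frozen dataclass for the protected part are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ProtectedState:
    """Slow or immutable 'imaginal' state: survives dissolution."""
    latent_programs: tuple[str, ...]  # e.g. ("sensory-like", "motor-like")
    commitments: tuple[float, ...]    # matching commitment levels


@dataclass
class Cell:
    protected: ProtectedState
    synapses: dict[str, float] = field(default_factory=dict)   # partner -> weight (plastic)
    transient: dict[str, float] = field(default_factory=dict)  # scratch structure (plastic)


def dissolve(cell: Cell, min_weight: float = 0.3) -> Cell:
    """Drop weak synapses and transient structure; `protected` is never touched."""
    cell.synapses = {k: w for k, w in cell.synapses.items() if w >= min_weight}
    cell.transient.clear()
    return cell


cell = Cell(
    protected=ProtectedState(("sensory-like", "motor-like"), (0.1, 0.6)),
    synapses={"a": 0.05, "b": 0.5, "c": 0.2},
    transient={"growth_cone": 1.0},
)
before = cell.protected
dissolve(cell)
```

Because `ProtectedState` is frozen, an accidental write such as `cell.protected.commitments = ...` raises `dataclasses.FrozenInstanceError`. If you want slow-changing rather than immutable state, replace the whole record with `dataclasses.replace` on a much slower schedule.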

---

## Concrete implementation pattern (non-code, but exact)

Think in layers:

### Layer 1: Universal neurons

All neurons start equivalent:

- same firing rules

- same plasticity

- same migration permissions

### Layer 2: Latent programs

Each neuron carries a vector of dormant programs:

- “sensory-like”

- “intermediate-like”

- “motor-like”

- maybe others later

None active yet.

### Layer 3: Developmental trigger

A condition flips:

- time

- coherence

- pressure

- failure to resolve tasks

This is your **pupation signal**.

### Layer 4: Program execution

Neurons _transition_:

- thresholds shift

- output bias changes

- synaptogenesis preferences change

- migration constraints change

They are not reassigned.

They **become**.
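The four layers can be combined in one small Python sketch: every neuron starts from the same universal parameters, carries a vector of dormant program activations, and only after the developmental trigger do those activations pull its parameters toward each program's profile. The profiles, parameter names, and linear blending are hypothetical choices for illustration.

```python
from dataclasses import dataclass, field

# Layer 1: universal starting parameters shared by every neuron (hypothetical values).
UNIVERSAL = {"threshold": 1.0, "output_gain": 1.0, "growth_bias": 0.5, "migration": 1.0}

# Layer 2: where each latent program would pull those parameters (hypothetical).
PROFILES = {
    "sensory-like": {"threshold": 0.6, "output_gain": 1.0, "growth_bias": 0.3, "migration": 0.2},
    "intermediate-like": {"threshold": 1.0, "output_gain": 0.8, "growth_bias": 0.6, "migration": 0.5},
    "motor-like": {"threshold": 1.4, "output_gain": 2.0, "growth_bias": 0.9, "migration": 0.1},
}


@dataclass
class DevelopingNeuron:
    # Dormant program activations, each assumed to lie in 0..1; none active at birth.
    activations: dict[str, float] = field(
        default_factory=lambda: {name: 0.0 for name in PROFILES})

    def params(self, triggered: bool) -> dict[str, float]:
        """Layers 3 and 4: identical until the trigger flips, then blended by activation."""
        total = sum(self.activations.values())
        if not triggered or total == 0:
            return dict(UNIVERSAL)
        w_universal = max(0.0, 1.0 - total)  # leftover weight stays universal
        norm = w_universal + total
        return {
            key: (w_universal * base
                  + sum(a * PROFILES[p][key] for p, a in self.activations.items())) / norm
            for key, base in UNIVERSAL.items()
        }


n = DevelopingNeuron()
n.activations["motor-like"] = 0.5
before = n.params(triggered=False)
after = n.params(triggered=True)
```

Before the trigger the half-activated neuron behaves exactly like every other cell; after it, its threshold moves from 1.0 to 1.2 and its output gain from 1.0 to 1.5. No role label is ever written; the neuron only drifts in parameter space.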

---

## Why this fits what you already observed

The motor pack “flying inward”?

That looks like a **proto-disc activating without a phase boundary**.

You saw:

- a latent specialization

- trying to execute too early

- with no protective context

That would explain why it looked brittle.

Butterflies only do that after the switch.

---

## The punchline (this is the core insight)

> **Roles should not be properties.**
>
> **Roles should be phase-dependent behaviors that emerge when conditions are met.**

That’s not sci-fi.

That’s straight biology.

If you want, next we can:

- design a minimal “imaginal program” system for neurons

- define what a pupation trigger looks like in your metrics

- or talk about how to safely allow partial differentiation and rollback

But you’re absolutely pointing at something _real_, and powerful.