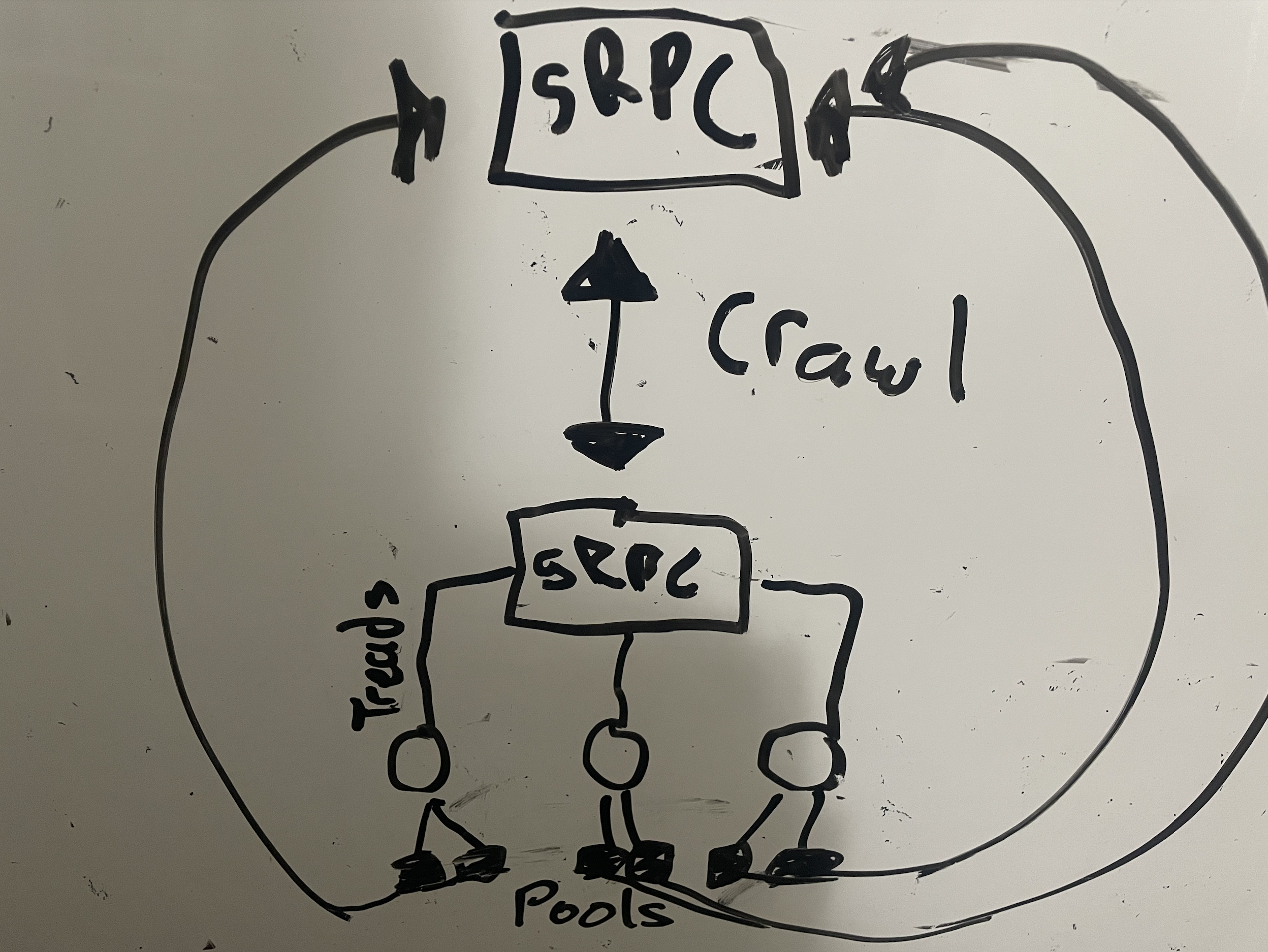

crawler

A gRPC web indexer turbo charged for performance.

Getting Started

Make sure to have Rust installed or Docker.

This project requires that you start up another gRPC server on port 50051 following the proto spec.

The user agent is spoofed on each crawl to a random agent and the indexer extends spider as the base.

cargo runordocker compose up

Installation

You can install easily with the following:

Cargo

The crate is available to setup a gRPC server within rust projects.

Docker

You can use also use the docker image at a11ywatch/crawler.

Set the CRAWLER_IMAGE env var to darwin-arm64 to get the native m1 mac image.

crawler:

container_name: crawler

image: "a11ywatch/crawler:${CRAWLER_IMAGE:-latest}"

ports:

- 50055

Node

We also release the package to npm @a11ywatch/crawler.

After import at the top of your project to start the gRPC server or run node directly against the module.

import "@a11ywatch/crawler";

About

This crawler is optimized for reduced latency and performance as it can handle over 10,000 pages within seconds.

In order to receive the links found for the crawler you need to add the website.proto to your server.

This is required since every request spawns a thread. Isolating the context drastically improves performance (preventing shared resources / communication ).

Help

If you need help implementing the gRPC server to receive the pages or links when found check out the gRPC node example for a starting point .

TODO

- Allow gRPC server port setting or change to direct url:port combo.

LICENSE

Check the license file in the root of the project.