Struct ax_banyan::Transaction

pub struct Transaction<T: TreeTypes, R, W> { /* private fields */ }

Everything that is needed to write trees. To write trees, you also have to read trees.

Implementations

impl<T, R, W> Transaction<T, R, W>

Basic random-access, append-only tree.

impl<T: TreeTypes, R, W> Transaction<T, R, W>

pub fn into_writer(self) -> W

Get the writer of the transaction.

This can be used to finally commit the transaction or manually store the content.

pub fn writer(&self) -> &W

pub fn writer_mut(&mut self) -> &mut W
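The three writer accessors above can be pictured with a small stand-in type. This is only a sketch under stated assumptions: `ToyTransaction`, its `reader` accessor, and the `commit` helper are hypothetical names invented for illustration, and plain `Vec<String>` values stand in for the store and the block writer. It shows a writer being filled through `writer_mut`, inspected through `writer`, and finally taken out with `into_writer` so the caller can commit its content.

```rust
// Hypothetical stand-in for illustration; this is not the ax_banyan type.
struct ToyTransaction<R, W> {
    reader: R,
    writer: W,
}

impl<R, W> ToyTransaction<R, W> {
    fn new(reader: R, writer: W) -> Self {
        Self { reader, writer }
    }

    // Read access to the (toy) store.
    fn reader(&self) -> &R {
        &self.reader
    }

    // Shared access to the writer.
    fn writer(&self) -> &W {
        &self.writer
    }

    // Mutable access to the writer.
    fn writer_mut(&mut self) -> &mut W {
        &mut self.writer
    }

    // Consume the transaction and hand the writer back to the caller.
    fn into_writer(self) -> W {
        self.writer
    }
}

// Hypothetical commit step: move all pending blocks into the store.
fn commit(store: &mut Vec<String>, pending: Vec<String>) -> usize {
    let written = pending.len();
    store.extend(pending);
    written
}

fn main() {
    let mut store: Vec<String> = vec!["block-0".to_string()];
    let mut txn = ToyTransaction::new(store.clone(), Vec::new());

    txn.writer_mut().push("block-1".to_string());
    txn.writer_mut().push("block-2".to_string());
    assert_eq!(txn.writer().len(), 2);
    assert_eq!(txn.reader().len(), 1);

    let pending = txn.into_writer();
    let written = commit(&mut store, pending);
    println!("committed {} blocks, store now has {}", written, store.len());
}
```

In this sketch nothing reaches the store until the writer is taken out and committed, which is the role the description of `into_writer` suggests.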

impl<T: TreeTypes, R, W> Transaction<T, R, W>

impl<T: TreeTypes, R: ReadOnlyStore<T::Link>, W: BlockWriter<T::Link>> Transaction<T, R, W>

pub fn pack<V: BanyanValue>( &mut self, tree: &mut StreamBuilder<T, V> ) -> Result<()>

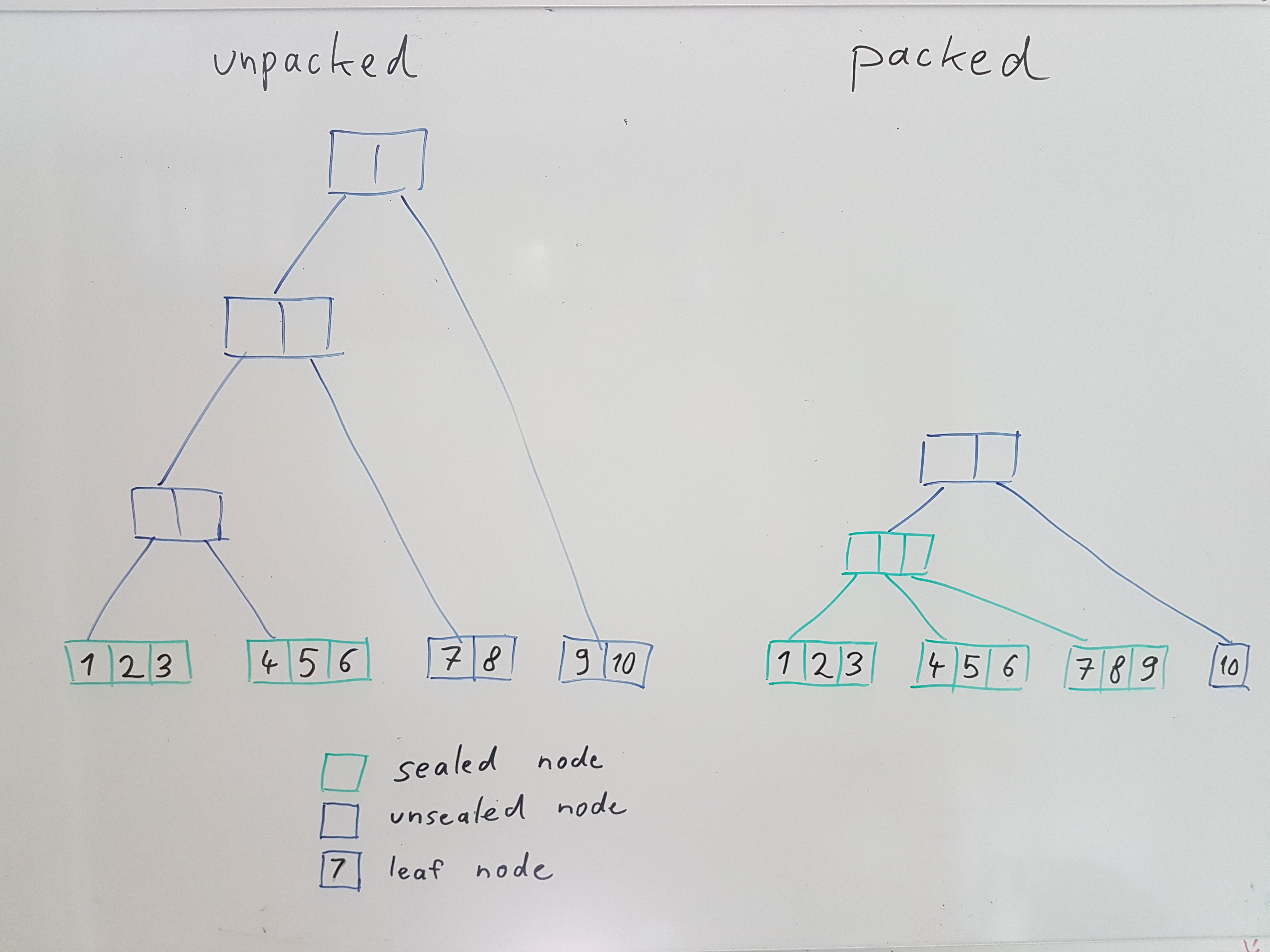

Packs the tree to the left.

For an already packed tree, this is a no-op. Otherwise, all packed subtrees will be reused without touching them. Likewise, sealed subtrees or leaves will be reused if possible.

pub fn push<V: BanyanValue>( &mut self, tree: &mut StreamBuilder<T, V>, key: T::Key, value: V ) -> Result<()>

Appends a single element. This is just a shortcut for extend.
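As a rough illustration of what "shortcut for extend" can mean, the sketch below defines a toy append-only log in which `push` simply forwards a one-element iterator to `extend`. `ToyLog` is a hypothetical type made up for this example; it is not how the library stores data, only the delegation pattern is the point.

```rust
use std::iter;

// Hypothetical append-only log standing in for a tree; illustration only.
struct ToyLog<K, V> {
    items: Vec<(K, V)>,
}

impl<K, V> ToyLog<K, V> {
    fn new() -> Self {
        Self { items: Vec::new() }
    }

    // Append every key/value pair produced by the iterator.
    fn extend<I: IntoIterator<Item = (K, V)>>(&mut self, from: I) {
        self.items.extend(from);
    }

    // Append one pair by delegating to `extend` with a one-element iterator.
    fn push(&mut self, key: K, value: V) {
        self.extend(iter::once((key, value)));
    }
}

fn main() {
    let mut log = ToyLog::new();
    log.push(1u64, "a");
    log.extend(vec![(2, "b"), (3, "c")]);
    println!("{} entries", log.items.len());
}
```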

pub fn extend<I, V>( &mut self, tree: &mut StreamBuilder<T, V>, from: I ) -> Result<()>

Extends the node with the given iterator of key/value pairs.

pub fn extend_unpacked<I, V>( &mut self, tree: &mut StreamBuilder<T, V>, from: I ) -> Result<()>

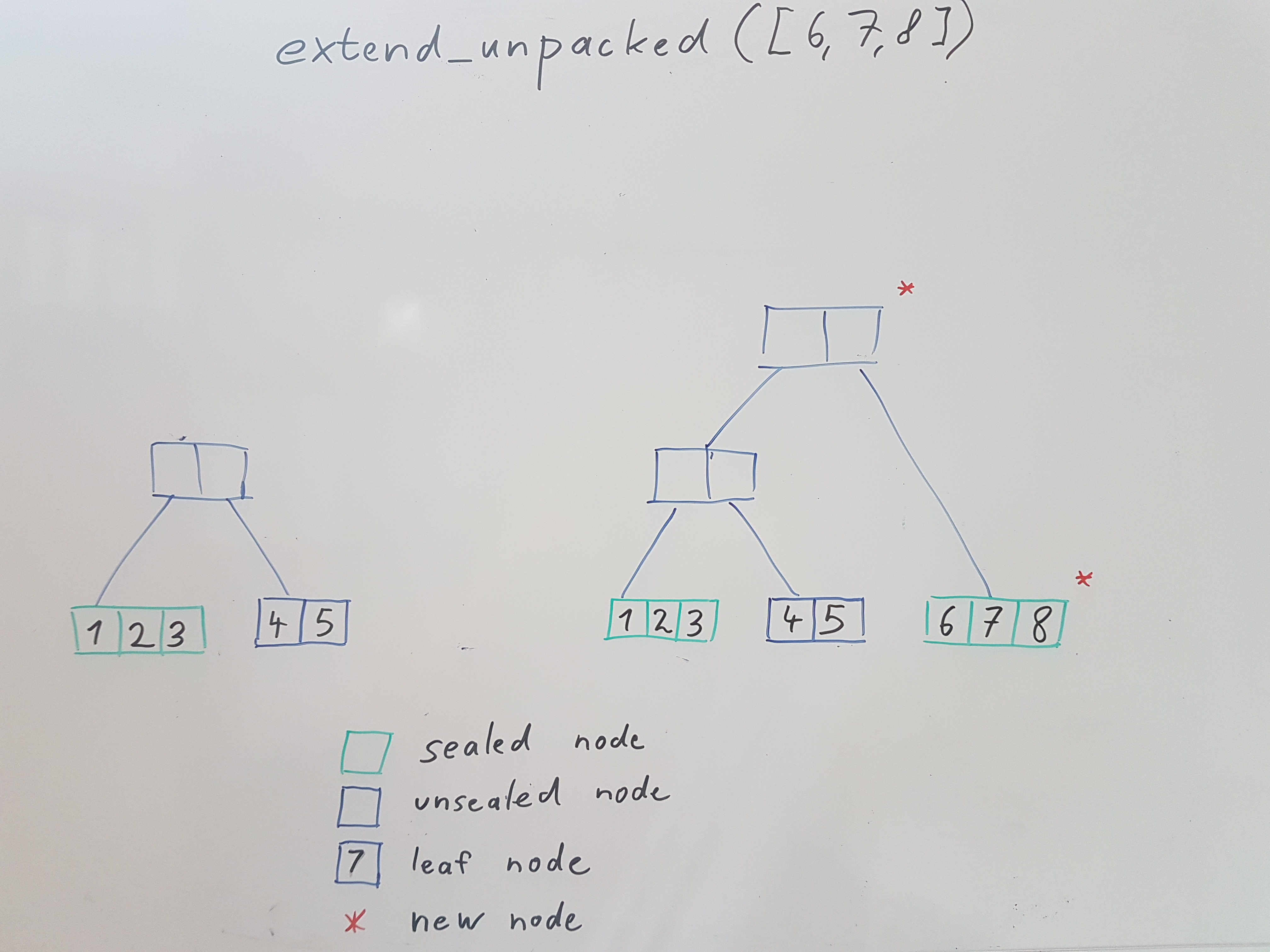

Extends the node with the given iterator of key/value pairs.

This variant will not pack the tree, but just create a new tree from the new values and join it with the previous tree via an unpacked branch node. Essentially this will produce a degenerate tree that resembles a linked list.

To pack a tree, use the pack method.
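The "resembles a linked list" remark can be made concrete with a toy binary tree. The sketch below is an assumption-laden model, not the library's node layout: `Node`, `extend_unpacked_toy`, and `build` are hypothetical names. Each extension wraps the previous tree and a new leaf in a fresh branch, so the depth grows by one per call.

```rust
// Hypothetical tree shape for illustration; not the ax_banyan node layout.
enum Node {
    Leaf(Vec<u64>),
    Branch(Box<Node>, Box<Node>),
}

impl Node {
    // Number of levels from this node down to the deepest leaf.
    fn depth(&self) -> usize {
        match self {
            Node::Leaf(_) => 1,
            Node::Branch(left, right) => 1 + left.depth().max(right.depth()),
        }
    }

    // Total number of stored values.
    fn count(&self) -> usize {
        match self {
            Node::Leaf(values) => values.len(),
            Node::Branch(left, right) => left.count() + right.count(),
        }
    }
}

// Join the previous tree and the new values under a new branch, without packing.
fn extend_unpacked_toy(tree: Node, values: Vec<u64>) -> Node {
    Node::Branch(Box::new(tree), Box::new(Node::Leaf(values)))
}

// Build a tree from `batches` single-value batches, one extension per batch.
fn build(batches: u64) -> Node {
    let mut tree = Node::Leaf(vec![0]);
    for i in 1..batches {
        tree = extend_unpacked_toy(tree, vec![i]);
    }
    tree
}

fn main() {
    let tree = build(8);
    println!("values: {}, depth: {}", tree.count(), tree.depth());
}
```

With eight single-value batches the toy tree holds eight values at depth eight, which is the linear growth that packing is meant to undo.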

pub fn retain<'a, Q: Query<T> + Send + Sync, V>( &'a mut self, tree: &mut StreamBuilder<T, V>, query: &'a Q ) -> Result<()>

Retains only the data matching the query.

This is done on a best-effort basis and is not precise. For example, if a chunk of data contains just a tiny bit that needs to be retained, the entire chunk will be retained.

It follows that this method is not suitable if you want to ensure that the non-matching data is completely gone.

Note that offsets will not be affected by this. Also, unsealed nodes will not be forgotten even if they do not match the query.
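The chunk-level, best-effort behaviour can be sketched with a toy model. Everything here is an assumption made for illustration: the fixed chunk size `CHUNK`, the helpers `retain_toy`, `get_toy`, and `sample`, and the use of `None` as a placeholder for a dropped chunk. The model ignores unsealed nodes. It shows that a chunk survives whole if any value in it matches, and that offsets of surviving values do not shift.

```rust
// Toy model only: a "tree" is a list of fixed-size chunks, and a dropped
// chunk becomes None so that later offsets do not shift.
const CHUNK: usize = 3;

// Keep a chunk if at least one of its values matches; drop it otherwise.
fn retain_toy(chunks: &mut [Option<Vec<u32>>], matches: impl Fn(u32) -> bool) {
    for chunk in chunks.iter_mut() {
        let keep = chunk
            .as_ref()
            .map_or(false, |values| values.iter().any(|v| matches(*v)));
        if !keep {
            *chunk = None;
        }
    }
}

// Look up a value by its global offset; None if the chunk is gone or out of range.
fn get_toy(chunks: &[Option<Vec<u32>>], offset: usize) -> Option<u32> {
    chunks
        .get(offset / CHUNK)?
        .as_ref()?
        .get(offset % CHUNK)
        .copied()
}

// Three chunks holding the values 1 through 9.
fn sample() -> Vec<Option<Vec<u32>>> {
    vec![
        Some(vec![1, 2, 3]),
        Some(vec![4, 5, 6]),
        Some(vec![7, 8, 9]),
    ]
}

fn main() {
    let mut chunks = sample();
    retain_toy(&mut chunks, |v| v == 5);
    // The value 4 does not match the query but lives in a retained chunk.
    println!("offset 3 -> {:?}", get_toy(&chunks, 3));
    println!("offset 0 -> {:?}", get_toy(&chunks, 0));
}
```

In the toy run, the non-matching values 4 and 6 remain readable because they share a chunk with 5, which is why this kind of retention cannot guarantee that non-matching data is gone.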

pub fn repair<V>( &mut self, tree: &mut StreamBuilder<T, V> ) -> Result<Vec<String>>

Repairs a tree by purging parts of the tree that cannot be resolved.

Produces a report of links that could not be resolved.

Note that this is an emergency measure to recover data if the tree is not completely available. It might result in a degenerate tree that can no longer be safely added to, especially if there are repaired blocks in the non-packed part.

Methods from Deref<Target = Forest<T, R>>

pub fn store(&self) -> &R

pub fn stream_trees<Q, S, V>( &self, query: Q, trees: S ) -> impl Stream<Item = Result<(u64, T::Key, V)>> + Send

Given a sequence of roots, will stream matching events in ascending order indefinitely.

This is implemented by calling stream_trees_chunked and just flattening the chunks.

pub fn stream_trees_chunked<S, Q, V, E, F>( &self, query: Q, trees: S, range: RangeInclusive<u64>, mk_extra: &'static F ) -> impl Stream<Item = Result<FilteredChunk<(u64, T::Key, V), E>>> + Send + 'static

Given a sequence of roots, will stream chunks in ascending order until it arrives at range.end().

- query: the query

- trees: the stream of roots. It is assumed that trees later in this stream will be bigger.

- range: the range to stream. It is up to the caller to ensure that we have events for this range.

- mk_extra: a function that computes extra info from indices. This can be useful to get progress info even if the query does not match any events.

pub fn stream_trees_chunked_threaded<S, Q, V, E, F>( &self, query: Q, trees: S, range: RangeInclusive<u64>, mk_extra: &'static F, thread_pool: ThreadPool ) -> impl Stream<Item = Result<FilteredChunk<(u64, T::Key, V), E>>> + Send + 'static

Given a sequence of roots, will stream chunks in ascending order indefinitely.

Note that this method has no way to know when the query is done. So ending this stream, if desired, will have to be done by the caller using e.g. take_while(...).

- query: the query

- trees: the stream of roots. It is assumed that trees later in this stream will be bigger.

- range: the range to stream. It is up to the caller to ensure that we have events for this range.

- mk_extra: a function that computes extra info from indices. This can be useful to get progress info even if the query does not match any events.
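The caller-side termination mentioned above can be illustrated with a synchronous analogue. The sketch uses `Iterator::take_while` from the standard library on an endless iterator; a real caller would apply the equivalent combinator to the returned async stream (for instance `StreamExt::take_while` from the `futures` crate, which is an assumption about the caller's setup). `endless_chunks` and `chunks_until` are hypothetical helpers, and each chunk is modelled as a plain `(start, end)` offset pair.

```rust
// Toy stand-in: an endless iterator of (start, end) offset ranges, one per
// chunk of ten offsets. This is not a real banyan stream.
fn endless_chunks() -> impl Iterator<Item = (u64, u64)> {
    (0u64..).map(|i| (i * 10, i * 10 + 9))
}

// Stop consuming as soon as a chunk starts beyond the last offset of interest.
fn chunks_until(last_offset: u64) -> Vec<(u64, u64)> {
    endless_chunks()
        .take_while(|(start, _)| *start <= last_offset)
        .collect()
}

fn main() {
    for (start, end) in chunks_until(25) {
        println!("chunk covering offsets {}..={}", start, end);
    }
}
```

Without the `take_while` step the `collect` call would never return, which mirrors why the caller has to decide when the stream is finished.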

pub fn stream_trees_chunked_reverse<S, Q, V, E, F>( &self, query: Q, trees: S, range: RangeInclusive<u64>, mk_extra: &'static F ) -> impl Stream<Item = Result<FilteredChunk<(u64, T::Key, V), E>>> + Send + 'static

Given a sequence of roots, will stream chunks in reverse order until it arrives at range.start().

Values within chunks are in ascending offset order, so if you flatten them you have to reverse them first.

- query: the query

- trees: the stream of roots. It is assumed that trees later in this stream will be bigger.

- range: the range to stream. It is up to the caller to ensure that we have events for this range.

- mk_extra: a function that computes extra info from indices. This can be useful to get progress info even if the query does not match any events.
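The note about reversing values before flattening can be sketched as follows. This is a simplified model in which each chunk is just a `Vec<u64>` of offsets rather than a `FilteredChunk`, and `flatten_descending` is a hypothetical helper. Chunks arrive newest first while values inside each chunk ascend, so each chunk is reversed while flattening to obtain a fully descending sequence.

```rust
// Flatten chunks that arrive in reverse order into one descending sequence.
// Each inner Vec is ascending, so it is reversed while flattening.
fn flatten_descending(chunks: Vec<Vec<u64>>) -> Vec<u64> {
    chunks
        .into_iter()
        .flat_map(|chunk| chunk.into_iter().rev())
        .collect()
}

fn main() {
    // Chunks as a reverse stream might deliver them: last chunk first.
    let chunks = vec![vec![4, 5], vec![2, 3], vec![0, 1]];
    println!("{:?}", flatten_descending(chunks));
}
```

Flattening without the per-chunk `rev()` would give `4, 5, 2, 3, 0, 1`, which is neither ascending nor descending.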

pub fn transaction<W: BlockWriter<TT::Link>>( &self, f: impl FnOnce(R) -> (R, W) ) -> Transaction<TT, R, W>

pub fn load_stream_builder<V>( &self, secrets: Secrets, config: Config, link: T::Link ) -> Result<StreamBuilder<T, V>>

pub fn load_tree<V>( &self, secrets: Secrets, link: T::Link ) -> Result<Tree<T, V>>

pub fn dump_graph<S, V>( &self, tree: &Tree<T, V>, f: impl Fn((usize, &NodeInfo<T, R>)) -> S + Clone ) -> Result<(Vec<(usize, usize)>, BTreeMap<usize, S>)>

Dumps the tree structure.

pub fn roots<V>(&self, tree: &StreamBuilder<T, V>) -> Result<Vec<Index<T>>>

Returns the sealed roots of the tree.

pub fn left_roots<V>(&self, tree: &Tree<T, V>) -> Result<Vec<Tree<T, V>>>

Returns the leftmost branches of the tree as separate trees.

pub fn check_invariants<V>( &self, tree: &StreamBuilder<T, V> ) -> Result<Vec<String>>

pub fn is_packed<V>(&self, tree: &Tree<T, V>) -> Result<bool>

pub fn assert_invariants<V>(&self, tree: &StreamBuilder<T, V>) -> Result<()>

pub fn stream_filtered<V: BanyanValue>( &self, tree: &Tree<T, V>, query: impl Query<T> + Clone + 'static ) -> impl Stream<Item = Result<(u64, T::Key, V)>> + 'static

pub fn iter_index<V>( &self, tree: &Tree<T, V>, query: impl Query<T> + Clone + 'static ) -> impl Iterator<Item = Result<Index<T>>> + 'static

Returns an iterator yielding all indexes that have values matching the provided query.

pub fn iter_index_reverse<V>( &self, tree: &Tree<T, V>, query: impl Query<T> + Clone + 'static ) -> impl Iterator<Item = Result<Index<T>>> + 'static

Returns an iterator yielding all indexes that have values matching the provided query in reverse order.

pub fn iter_filtered<V: BanyanValue>( &self, tree: &Tree<T, V>, query: impl Query<T> + Clone + 'static ) -> impl Iterator<Item = Result<(u64, T::Key, V)>> + 'static

pub fn iter_filtered_reverse<V: BanyanValue>( &self, tree: &Tree<T, V>, query: impl Query<T> + Clone + 'static ) -> impl Iterator<Item = Result<(u64, T::Key, V)>> + 'static

pub fn iter_from<V: BanyanValue>( &self, tree: &Tree<T, V> ) -> impl Iterator<Item = Result<(u64, T::Key, V)>> + 'static

pub fn iter_filtered_chunked<Q, V, E, F>( &self, tree: &Tree<T, V>, query: Q, mk_extra: &'static F ) -> impl Iterator<Item = Result<FilteredChunk<(u64, T::Key, V), E>>> + 'static

pub fn iter_filtered_chunked_reverse<Q, V, E, F>( &self, tree: &Tree<T, V>, query: Q, mk_extra: &'static F ) -> impl Iterator<Item = Result<FilteredChunk<(u64, T::Key, V), E>>> + 'static

pub fn stream_filtered_chunked<Q, V, E, F>( &self, tree: &Tree<T, V>, query: Q, mk_extra: &'static F ) -> impl Stream<Item = Result<FilteredChunk<(u64, T::Key, V), E>>> + 'static

pub fn stream_filtered_chunked_reverse<Q, V, E, F>( &self, tree: &Tree<T, V>, query: Q, mk_extra: &'static F ) -> impl Stream<Item = Result<FilteredChunk<(u64, T::Key, V), E>>> + 'static

pub fn get<V: BanyanValue>( &self, tree: &Tree<T, V>, offset: u64 ) -> Result<Option<(T::Key, V)>>

Returns the element at the given offset.

Returns Ok(None) when offset is larger than count, or when hitting a purged part of the tree. Returns an error when part of the tree should be there, but could not be read.
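The three outcomes described above (a value, `Ok(None)`, or an error) can be modelled with a small stand-in. The `Slot` enum and `get_toy` function are hypothetical and use `String` for both values and errors; the sketch only mirrors the documented distinction between data that is legitimately absent and data that should exist but cannot be read.

```rust
// Hypothetical per-offset state for illustration; not a banyan type.
#[derive(Debug, Clone, PartialEq)]
enum Slot {
    Present(String),
    Purged,
    Unreadable,
}

// Out-of-range and purged offsets are "not there" (Ok(None));
// an unreadable slot is a genuine failure (Err).
fn get_toy(slots: &[Slot], offset: u64) -> Result<Option<String>, String> {
    match slots.get(offset as usize) {
        None => Ok(None),
        Some(Slot::Purged) => Ok(None),
        Some(Slot::Present(value)) => Ok(Some(value.clone())),
        Some(Slot::Unreadable) => {
            Err(format!("block for offset {} could not be read", offset))
        }
    }
}

fn main() {
    let slots = vec![
        Slot::Present("a".to_string()),
        Slot::Purged,
        Slot::Unreadable,
    ];
    for offset in 0..4 {
        println!("offset {} -> {:?}", offset, get_toy(&slots, offset));
    }
}
```

Callers can therefore treat `Ok(None)` as an expected result and reserve error handling for the case where the store is incomplete or broken.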

pub fn collect<V: BanyanValue>( &self, tree: &Tree<T, V> ) -> Result<Vec<Option<(T::Key, V)>>>

Collects all elements from a stream. Might produce an OOM for large streams.

pub fn collect_from<V: BanyanValue>( &self, tree: &Tree<T, V>, offset: u64 ) -> Result<Vec<Option<(T::Key, V)>>>

Collects all elements from the given offset. Might produce an OOM for large streams.